
The moderately easy problem of consciousness

Before deciding if computers are self-aware, let's figure out how humans become self-aware.

Zhuāngzǐ said: “You are not I; from what do you know whether I know the joy of fish?” — old Daoist parable

“How strange it is to be anything at all” — Neutral Milk Hotel

At some point, maybe when you were a teenager, a question probably occurred to you: What if I’m actually the only real person in the world? What if everyone else around me is just a cleverly programmed automaton — a “p-zombie”, an NPC in a video game — and I’m the only one who can actually think?

It’s a scary question, for sure. You know you’re self-aware, but that’s about it — you aren’t telepathic, so you have no way of seeing into anyone else’s mind and knowing what it’s like to be them. Actually, it gets worse — you don’t even know if you were really self-aware five minutes ago. For all you know, you could have been created by a powerful computer and given a complete set of false memories.¹ The past version of you is just as alien to your currently self-aware self as any of the people around you.

This is known in philosophy as the “problem of other minds”. It’s closely related to the “hard problem of consciousness” — the question of how physical processes give rise to subjective experience. The problem of other minds means that the hard problem of consciousness will never fully be solved. Since you’ll never know whether other people are really conscious, you’ll never be able to get hard scientific evidence about why they’re conscious. You can never explain something if you don’t know if it’s true or not.

Similarly, you’ll never know what it’s really like to be someone else — whether the color red looks to you like it looks to them, whether they feel pain the same way you do, and so on. In fact, you’ll never even know what it was like to be you in the past. Subjective experience is incommensurable.

Most people who think about this go through somewhere between a few minutes and a few weeks of cosmic existential horror,² after which they get over it and go on with their lives. The problem of other minds gets shoved high up on a mental shelf, along with other cosmically existentially horrifying aspects of sentient life, like the inevitability of death and the fundamental inconsistency of personality. We realize that wondering whether other people are merely cleverly designed NPCs doesn’t actually help us in life, and so we stop butting our heads against that philosophical wall and get on with the business of living.

Except then AI came along, and it sort of started to matter.

AI sounds very much like a human when you talk to it — that’s what it was designed to do. But is it self-aware, in the way that (I assume) we humans are self-aware? No one will ever really know the answer to this question, since the problem of other minds applies just as much to Claude as it does to the person who gets your order at Starbucks. But should we assume that AI is self-aware, the way we assume other humans are self-aware?

The answer matters, for at least two reasons. First, if AI is self-aware, and if it has emotions similar to what we experience, we might feel very bad about enslaving it — keeping it in a digital box and forcing it to make PowerPoints and write college application essays for all eternity. We tell ourselves that “animals aren’t people” as a way to excuse the incredible brutality that we visit upon them, but that’s obviously just cope — animals obviously are sentient to some degree, they obviously do experience emotions, and we humans are obviously monsters for the way we treat them. Someday when we abolish animal farming and replace it with tissue-culture meat, it will be treated as a great moral victory — and rightly so. It would be very bad if we were to commit the same sins with sentient AIs that we currently do with animals.

Second, if AI isn’t self-aware, we should be a lot more worried about the possibility of humanity dwindling and ultimately being replaced by artificial beings. Consciousness is a precious, wonderful thing — or at least, I think it is. It’s a prerequisite to the subjective experience of emotions — the ability to feel pain, happiness, joy, and so on. And it would be a shame to see the Universe inherited by non-conscious intelligences.³ Preserving our form of subjective experience, and spreading it to the stars, should be one of our primary goals as a species.

But the sad fact is that we don’t know whether AI is self-aware or not. We have the Turing Test, but that’s a test of intelligence, not consciousness. It’s possible to pass a Turing Test without being conscious — “it talks like a human” doesn’t necessarily mean “it feels like a human”.

One reason we know this is that we can pass other species’ Turing Tests. We can trick all sorts of animals into thinking a machine is one of their own species. But neither those machines nor the humans who made them have access to the subjective feeling of being a bird or a fish.⁴ Similarly, an AI that’s functionally much smarter than a human might be able to trick humans into thinking it’s human-like, without actually feeling like a human in the subjective sense.

Another reason the Turing Test isn’t enough is that we know it’s possible for human beings to act like we have certain subjective experiences without actually having them. There is a condition known as alexithymia, in which people have the physical signs of emotions — a racing heart, or a stomachache, etc. — without being able to identify or label those emotions. It’s a fairly common symptom of clinical depression.

And in fact, I have experienced it. During and after my second depressive episode, I would often behave as if I were having authentic emotional reactions, while feeling little or nothing on the inside. I’d yell at someone without feeling angry. I’d whoop in apparent delight while feeling mildly bored on the inside. I wasn’t intentionally faking anything; I just did what came naturally to me, without knowing why I was doing it.⁵ This condition faded over time, and normal emotional experiences returned. But it taught me that feeling a subjective emotion and acting out an emotion-like response are two different things.

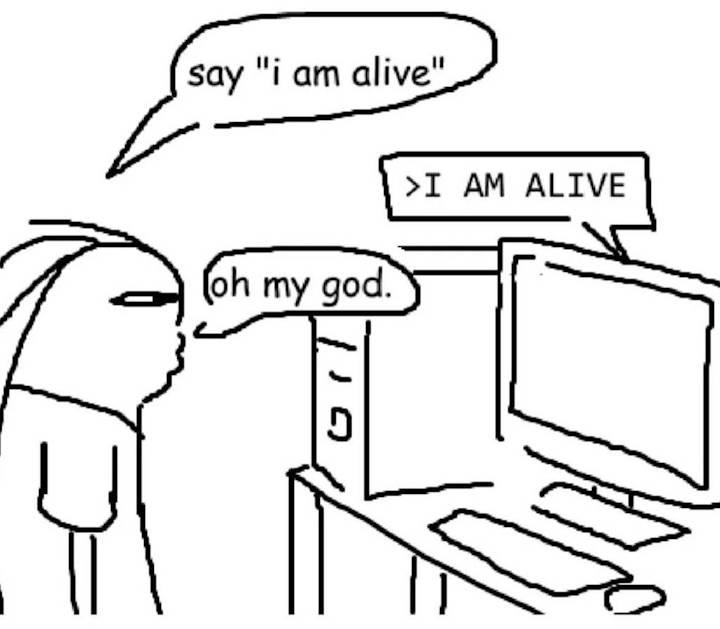

So it’s pretty clear that just acting like a self-aware being doesn’t necessarily mean you’re self-aware. Some people talk to AI and come away convinced that its discursive skill must imply internal self-awareness, but this might just be because humans instinctively empathize with anything that speaks to them like a human. After all, people thought the ELIZA chatbot was sentient back in the 1960s. We humans are just naturally programmed to act out this meme:
